AI in Cybersecurity: Why the Human in the Loop Is the Architecture, Not a Bottleneck

Only 2.4% of AI agent activity is in cybersecurity. Why the human in the loop remains the most critical layer in modern security defense.

TL;DR

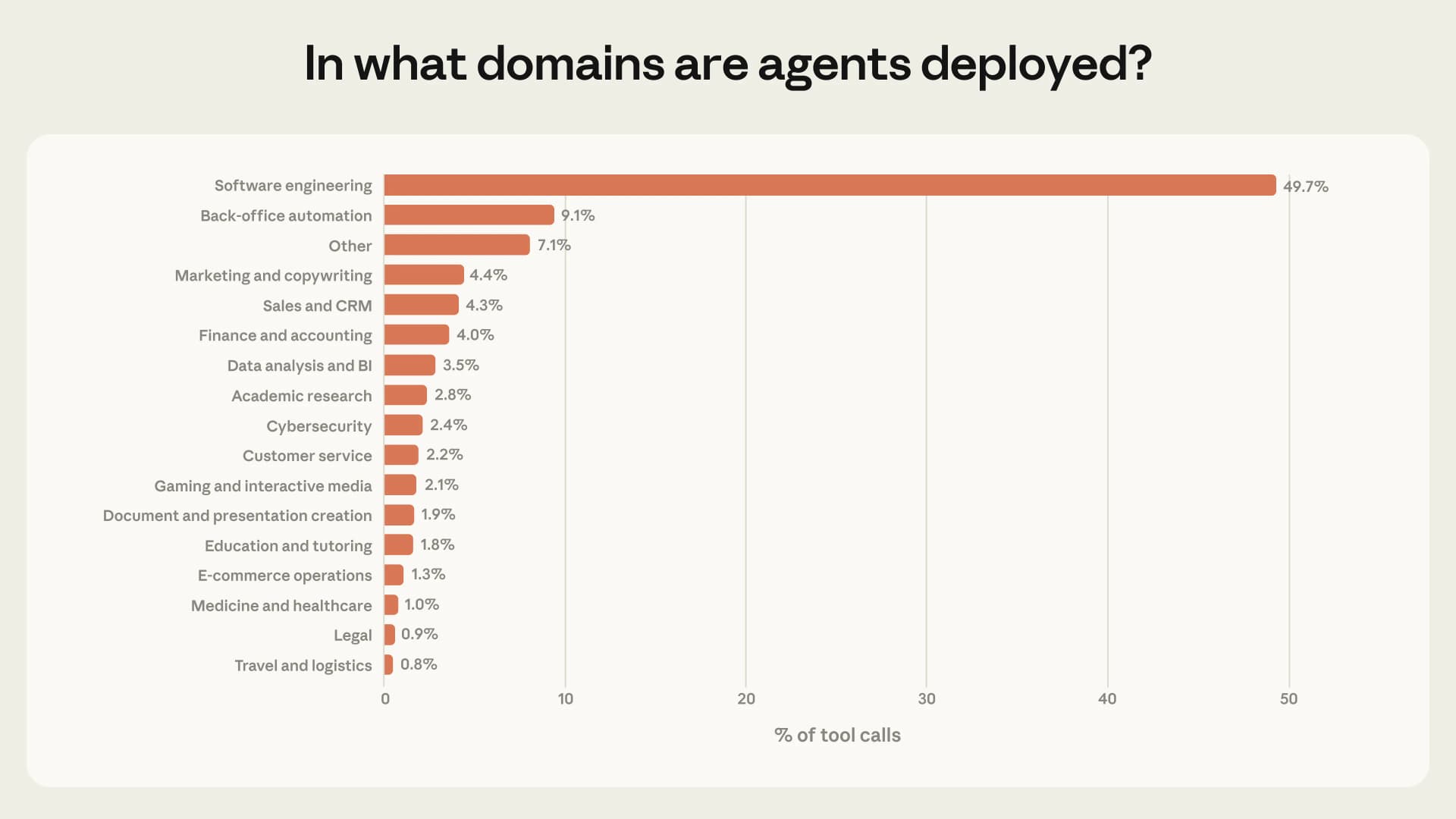

Anthropic's 2026 research on AI agent deployment reveals that cybersecurity accounts for only 2.4% of all agent tool calls, while software engineering dominates at 49.7%. This is not a failure of adoption. It is a signal of maturity. Security is an adversarial domain where human judgment carries accountability, signals are deliberately manipulated, and the difference between a false alarm and a nation-state intrusion rests on experience that cannot be serialized and handed to a machine. The human in the loop is not a limitation. It is the architecture.

The Chart That Should Surprise You

There is a chart making the rounds from Anthropic's latest research on how AI agents are actually being deployed across industries. Software engineering sits at the top. 49.7% of all agent tool calls. Nearly half. Below it, the list drops fast: back office automation at 9.1%, marketing at 4.4%, data analysis at 3.5%.

And then, somewhere near the bottom, easy to miss: Cybersecurity. 2.4%.

That number should surprise you. Not because cybersecurity is somehow unimportant. It is arguably the most consequential domain on the list. But because the gap between how much AI is being used in security and how much it is being talked about in security is enormous. Every conference, every vendor pitch, every LinkedIn post promises AI-driven threat detection, autonomous incident response, machine-speed defense.

And yet, when you look at where agents are actually working, cybersecurity barely registers.

There is a reason for that. And the reason is more interesting than the number.

Why Cybersecurity Resists AI Automation

Cybersecurity is not like software engineering.

In software engineering, the feedback loop is clean. You write code. It compiles or it does not. Tests pass or they fail. The output is deterministic enough that an agent can iterate autonomously: write, test, fix, repeat. That is why nearly half of all agent activity lives there. The loop is tight. The signal is clear.

Security does not work that way.

In security, the signal is adversarial. Someone is actively trying to make the data misleading. The attacker's entire job is to make their activity look like normal traffic, like legitimate access, like a routine log entry.

An AI agent can scan a million log entries in seconds. That is genuinely useful. But deciding whether the anomaly in entry 847,321 is a misconfigured service, a routine edge case, or the first indicator of a nation-state intrusion requires something models do not have: situated judgment.

The kind of judgment that comes from a human analyst who has seen three incidents that started exactly like this. Who knows that this particular network segment was restructured last Tuesday. Who remembers that the same IP range showed up in a threat briefing two weeks ago. And who has the institutional authority to escalate or dismiss based on a synthesis of context that no model can access, because it lives in the analyst's experience, not in the data.

Consider what happens inside a Security Operations Center at 3 AM. A SIEM platform flags a lateral movement pattern between two internal hosts. The AI triage layer scores it as medium severity based on historical baselines. A junior analyst would follow the playbook: isolate, escalate, document. But the senior analyst on shift recognizes those two hosts. One belongs to the infrastructure team running an authorized migration that was announced in a Slack channel three days ago. The other is a staging server that always generates anomalous traffic during deployment windows. She closes the ticket in forty seconds. No playbook covered this. No model had access to the Slack message, the migration schedule, or the institutional memory of what "normal" looks like for those two specific machines during that specific week.

That is situated judgment. And it is the reason cybersecurity resists the kind of end-to-end automation that works so well for compiling code or running regression tests.

The Sentinel: An Anthropology of Cybersecurity Vigilance

Every human society that has ever existed has had watchers. Sentinels. People whose role was not to build the walls or till the fields, but to stand at the edge of the settlement and look outward into the dark.

This role was never purely technical. The sentinel did not simply observe. The sentinel interpreted. A rustling in the grass could be wind, an animal, or an approaching enemy. The difference between those three interpretations was not a matter of better sensors. It was a matter of accumulated knowledge about this specific field, this season, this pattern of raids, this enemy's expected behavior. The sentinel's value was not their eyesight. It was their judgment, crafted by living inside a context that no outsider, no matter how perceptive, could replicate from data alone.

When we automate the watching, we do not get a better sentinel. We get a faster sensor. Those are not the same thing.

SOC analysts are the sentinels of the networked world. And what Anthropic's data is quietly confirming is something that human societies have understood for millennia: the watching role resists mechanization because it is not fundamentally a perception task. It is a meaning-making task. The sentinel does not just see. The sentinel decides what the seeing means.

And that decision draws on layers of cultural, institutional, and experiential knowledge that cannot be extracted, serialized, and handed to a machine.

The Accountability Problem with Autonomous AI Security

There is another dimension to this that the "AI everywhere" narrative consistently ignores.

When a security decision is wrong, someone answers for it. If a threat is dismissed and it turns into a data breach, there is an investigation. There is a timeline. There is a human whose judgment is reviewed, questioned, and, if necessary, held accountable. This is not a bug in the system. This is how security governance works. The accountability chain is what makes the entire system function.

Remove the human from the loop and you do not just lose judgment. You lose accountability. And a security system without accountability is not a security system. It is a liability generator.

Every society that has dealt with consequential decisions, from tribal councils to modern courts, has understood that the weight of a decision must rest on a person. Not because people are infallible, but because the social contract of accountability is what gives the decision legitimacy. A judge who makes a wrong call can be appealed. An algorithm that makes a wrong call can only be patched. These are not equivalent. One is justice. The other is maintenance.

How Organizations Actually Deploy AI in Security

Before dismissing that 2.4% figure as the whole story, it is worth understanding what is happening inside the organizations that are using AI in their security stack.

The numbers are not small. In 2026, 77% of organizations report using generative AI or large language models somewhere in their security operations. And 67% have deployed some form of agentic AI for autonomous or semi-autonomous security tasks. The technology is present. The question is how it is being governed.

The answer is revealing: 70% of organizations run a human-in-the-loop model. AI recommends. A person approves. Only 14% allow AI to take independent remediation actions with no human oversight. The remaining 16% fall somewhere in between, with varying levels of automation gated behind human checkpoints.

This is not timidity. This is engineering discipline. Organizations automate where precision is high and consequences are contained. Automated blocking of known malware signatures. Automated quarantine of phishing emails matching established patterns. Automated patch deployment for low-risk vulnerabilities. These are the tasks where the feedback loop is tight enough for machines to operate independently.

But the moment a decision touches critical systems, legal implications, or ambiguous threat data, the human steps back in. Containment decisions affecting production infrastructure. Access revocations for privileged accounts. Escalation calls that trigger regulatory notification requirements. These are the moments where the cost of a wrong automated decision exceeds the cost of waiting thirty seconds for a human to review.
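The split described above, automation for contained and high-precision tasks with a human gate for everything consequential, can be sketched as a small routing function. This is an illustrative sketch only, not any vendor's implementation: the `ProposedAction` fields, the `gate` function, and the 0.95 threshold are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTO_REMEDIATE = "auto_remediate"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class ProposedAction:
    """An action recommended by the AI layer (hypothetical schema)."""
    name: str
    confidence: float          # model confidence in the detection, 0.0 to 1.0
    touches_production: bool   # e.g. containment on production infrastructure
    privileged_account: bool   # e.g. revoking access for a privileged account
    regulatory_impact: bool    # e.g. escalation that triggers notification duties


# Assumed threshold; a real value would come from measured precision.
AUTO_CONFIDENCE_THRESHOLD = 0.95


def gate(action: ProposedAction) -> Decision:
    """Route an AI-proposed action to automation or to a human approver."""
    high_consequence = (
        action.touches_production
        or action.privileged_account
        or action.regulatory_impact
    )
    # Any high-consequence flag, or insufficient confidence, forces review.
    if high_consequence or action.confidence < AUTO_CONFIDENCE_THRESHOLD:
        return Decision.HUMAN_REVIEW
    return Decision.AUTO_REMEDIATE


quarantine = ProposedAction("quarantine_known_phish", 0.99, False, False, False)
revoke = ProposedAction("revoke_admin_access", 0.99, False, True, False)

print(gate(quarantine).value)  # auto_remediate
print(gate(revoke).value)      # human_review
```

Note that high confidence alone never bypasses the gate in this sketch: the privileged-account revocation goes to a person even at 0.99, which mirrors the point that consequence, not model certainty, decides where the human steps in.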

The industry has not rejected AI. It has placed AI exactly where it belongs: as the fastest layer in a defense architecture where the most consequential layer remains human.

The 84% Reality: How Many People Actually Use AI?

Now zoom out further.

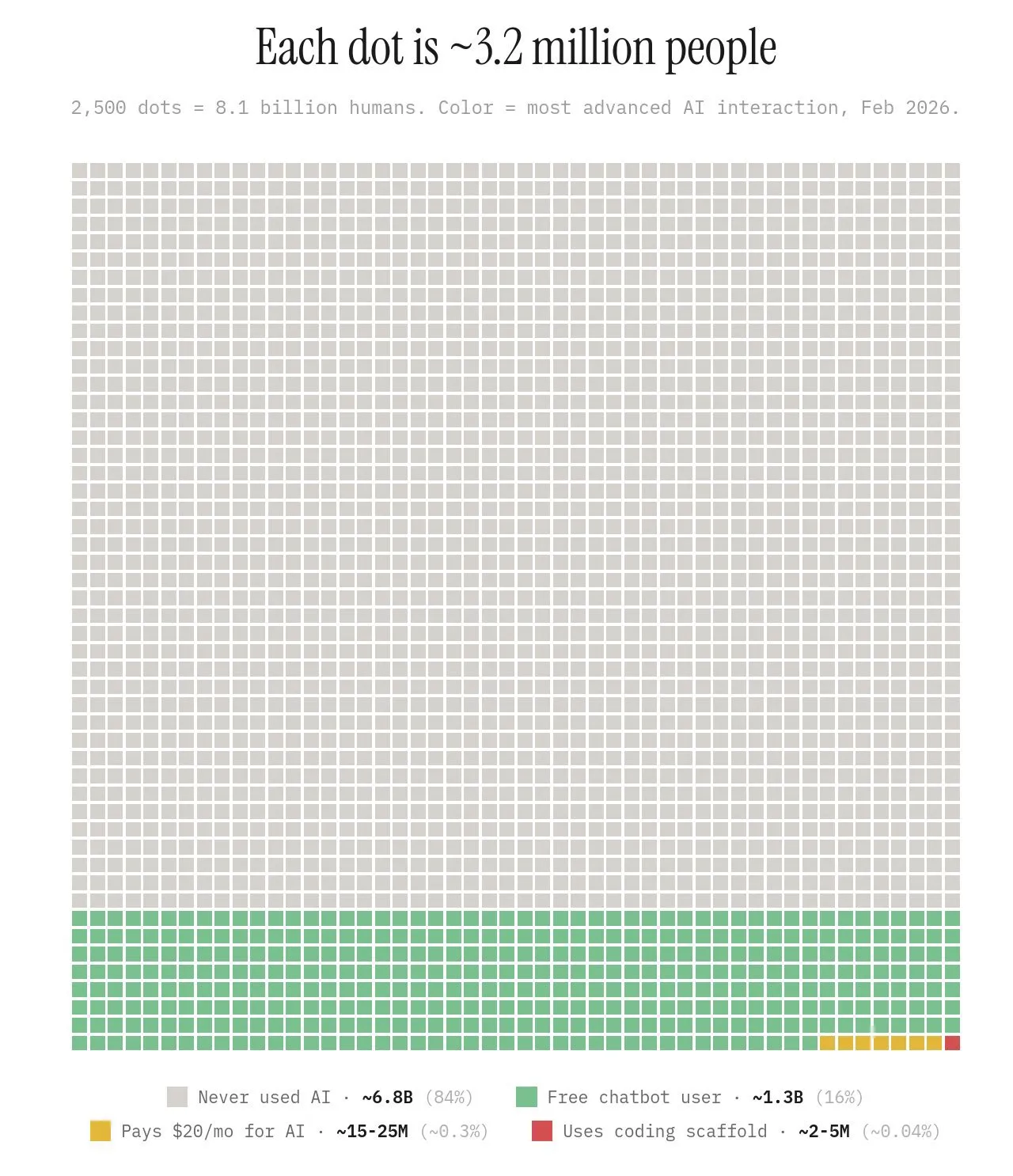

There is another visualization circulating, a dot matrix representing the entire global population. 8.1 billion people. Each dot is 3.2 million humans. The colors tell the story:

- 84% have never used AI at all. 6.8 billion people who have never typed a prompt.

- 16% are free chatbot users.

- Roughly 0.3% pay for AI.

- Approximately 0.04%, somewhere between 2 and 5 million people on the entire planet, use coding scaffolds.
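The percentages above are easier to weigh as headcounts. The short calculation below is only a sanity check on the figures quoted from the visualization (8.1 billion people, 3.2 million per dot); the `headcount` helper and the dictionary labels are names invented for this example, and the shares are the rounded values given in the list, so they need not sum to exactly 100%.

```python
WORLD_POPULATION = 8.1e9   # figure quoted from the visualization
PEOPLE_PER_DOT = 3.2e6     # each dot in the matrix

# Rounded shares as quoted in the list above.
shares = {
    "never used AI": 0.84,
    "free chatbot users": 0.16,
    "pay for AI": 0.003,
    "use coding scaffolds": 0.0004,
}


def headcount(share: float, population: float = WORLD_POPULATION) -> float:
    """Convert a population share into a number of people."""
    return share * population


for label, share in shares.items():
    people = headcount(share)
    dots = people / PEOPLE_PER_DOT
    print(f"{label}: {people / 1e6:,.0f} million people, about {dots:,.0f} dots")

print(f"total dots in the matrix: {WORLD_POPULATION / PEOPLE_PER_DOT:,.0f}")
```

Under these figures, 84% works out to roughly 6.8 billion people, and 0.04% to a little over 3 million, which sits inside the 2 to 5 million range given for coding scaffold users and amounts to about a single dot in the whole matrix.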

The entire global conversation about AI transforming everything is being driven largely by the experience of the people who pay for AI or build with it: less than 1% of the world's population. The security professionals deploying AI agents, the engineers building automated defenses, the analysts whose workflows have been restructured: they are a rounding error in the human story.

This is not an argument against AI's importance. It is an argument for proportion. When someone says "AI is changing cybersecurity", they are describing a phenomenon that affects a vanishingly small slice of humanity, discussed by an even smaller slice, and deployed by a smaller slice still. The urgency may be real. The scale of current impact is not what the narrative suggests.

Throughout history, transformative technologies have always been narrated by the tiny minority who use them first, creating a perception of universality that does not match the lived reality of most people. The printing press was changing everything for decades before most Europeans had held a book. Electricity was the future while most of the world still cooked over fire. We are living inside that same distortion right now, except the speed of the narrative has outpaced the speed of the adoption by the widest margin in recorded history.

What AI in Cybersecurity Means for Your Career

If you are studying cybersecurity, or working in it, or considering it, here is the honest picture.

AI will change your tools. The scanning, the log analysis, the initial triage, the vulnerability enumeration. These are being automated and will continue to be. If your plan is to spend a career doing what an agent can do in seconds, revise the plan.

AI will not replace your role. Not because the technology cannot improve. It will. But because the nature of the adversarial problem means that the thing being defended against is also using AI. This creates an arms race where human judgment is not a bottleneck. It is the tiebreaker. The attacker has AI. The defender has AI. The difference is the human who decides what to do with what the AI surfaces.

The skills that matter are shifting. Rote log review is disappearing. Manual vulnerability scanning is already gone. What is growing is the demand for professionals who can interpret AI-generated findings, who can distinguish between an AI system crying wolf and an AI system that has found something genuinely novel, who can design the policies that govern what AI is allowed to do autonomously and where human gates must remain. Threat hunting, incident response leadership, security architecture, and AI governance are where the career paths lead now.

Our species has always needed its sentinels. The tools they carry change with every era. The role does not. The person at the edge of the settlement, looking into the dark, deciding what the shapes mean. That is an ancient job. It is also yours.

Learn the tools. Use the models. Automate what can be automated. But build yourself around the part that cannot be: the judgment, the context, the accountability, the willingness to stand in the gap between what the machine sees and what it means.

That is the job. The machines make it faster. They do not make it unnecessary.

They never will.

Daute built Unihackers after a decade defending airlines, managed SOCs and international organisations. He is an Associate C|CISO and a regular voice on AI and cybersecurity in international media. Silver Winner at the 2021 Cyber Security Excellence Awards. He teaches the way he wishes someone had taught him: skip the noise, train on what attackers actually do, and graduate people who are useful from day one.
